Google has launched the AIY (AI yourself) Vision Kit, which lets you turn a Raspberry Pi into an image-recognition device. The Vision Kit is powered by Google’s TensorFlow machine-learning models and will soon gain an accompanying Android app for controlling the device.

Earlier this year Google also introduced the AIY Voice Kit, which allows makers to turn a Raspberry Pi into a voice-controlled assistant using the Google Assistant SDK.

The Vision Kit features “on-device neural network acceleration”, allowing a Raspberry Pi-based box to do computer vision without processing in the cloud. The AIY Voice Kit, by contrast, relies on the cloud for natural-language processing.

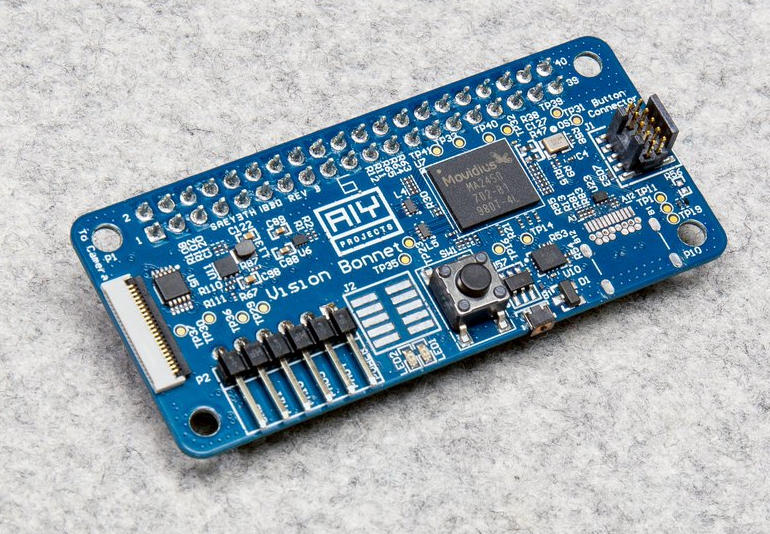

Makers will need to provide their own Raspberry Pi Zero W, a Raspberry Pi camera, a 4GB SD card, and a power supply. The Vision Kit itself includes the VisionBonnet accessory board, cables, the cardboard box and frame, lens equipment, and a privacy LED to tell others when the camera is on.

The VisionBonnet board was developed by Google and is powered by Intel’s Movidius MA2450 vision processing chip. This chip is the secret sauce of the Vision Kit: it performs computer vision 60 times faster than a Raspberry Pi 3 could on its own. The VisionBonnet is connected to the Raspberry Pi Zero W by a cable supplied in the kit.

Vision Kit makers can use several neural-network programs. The first can detect when people, cats, and dogs are in view. Another detects happiness, sadness, and other sentiments. A third, based on MobileNets, can recognize 1,000 different objects, such as a chair, an orange, or a cup.
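
For readers curious how an object classifier of this kind reports what it sees, the following is a minimal sketch of the usual final step: converting a model's raw per-class scores into probabilities and keeping the most likely labels. It is not the Vision Kit's own API. The function names, the four labels, and the scores are all hypothetical and chosen only for illustration; a real 1,000-object model would produce 1,000 scores.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k(logits, labels, k=3):
    """Return the k most probable (label, probability) pairs, best first."""
    probs = softmax(logits)
    ranked = sorted(zip(labels, probs), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]


# Hypothetical labels and scores, for illustration only.
labels = ["chair", "orange", "cup", "keyboard"]
logits = [0.5, 3.2, 1.1, -0.7]

for label, prob in top_k(logits, labels, k=2):
    print(f"{label}: {prob:.2f}")
```

Reporting only the top few labels with their probabilities lets an application ignore low-confidence guesses, for example by acting only when the best label clears a chosen threshold.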

Google hopes developers will build on these neural networks and apply them to new tasks, such as making the cat/dog/person detector recognize rabbits. To help achieve this goal, it has provided a tool to compile models for the Vision Kit, so that makers can retrain models with TensorFlow.

The Vision Kit is priced at $44.99 at electronics seller Micro Center and will be available on December 31.