In many ways, the work can be described as a way of interpreting complexities in the relations between a couple, and the displacement of these complexities to the domain of creative interaction.

This is how the creator ::vtol:: explains his creation.

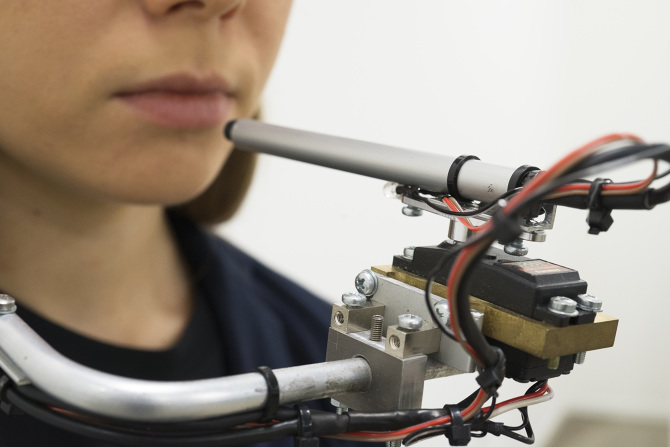

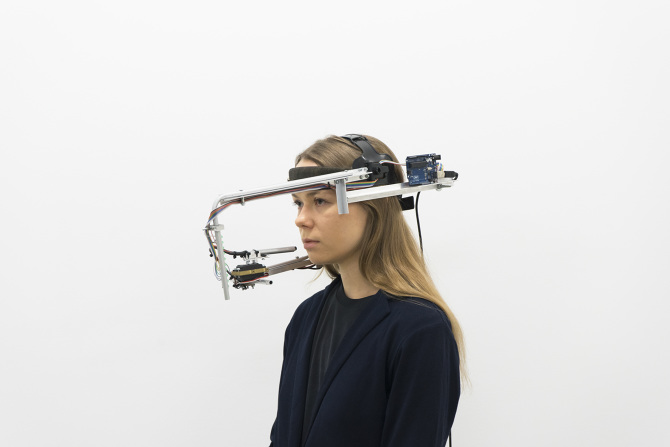

One participant wears the device, which points a directional microphone under the control of the other participant at her mouth using a servo motor. An array of LEDs signal the vocalist in a manner agreed upon before the performance.

The head-mounted system is controlled by an Arduino Uno, and is meant to display the subtle interaction between two participating artists, as they must work together to produce the desired output.

“You, me and all these machines” is a performance for voice and electronic devices. The vocalist puts on his or her head a specially designed wearable interface tool to interact with the voice and display a visual score. Technically, the device consists of several elements: a narrowly directional microphone driven by a motor; an LED strip that shows the vocalist score; remote control with a joystick used by the second participant to control the interface. Shifting the microphone against the mouth makes it possible to achieve interesting sound effects, and makes it easier to manipulate the vocalist’s voice. The LED line consisting of 10 diodes is a very primitive, but effective and convenient way of interacting with the vocalist, and the way of interpreting the values is predetermined before each performance. During the performance, a sound canvas is formed, thereby changing the dynamics, consisting of a set of looped fragments created within voice and interface processing elements, without using other methods to generate sounds.

You can see how it works in the video below. It’s strange, for sure…but it works :)